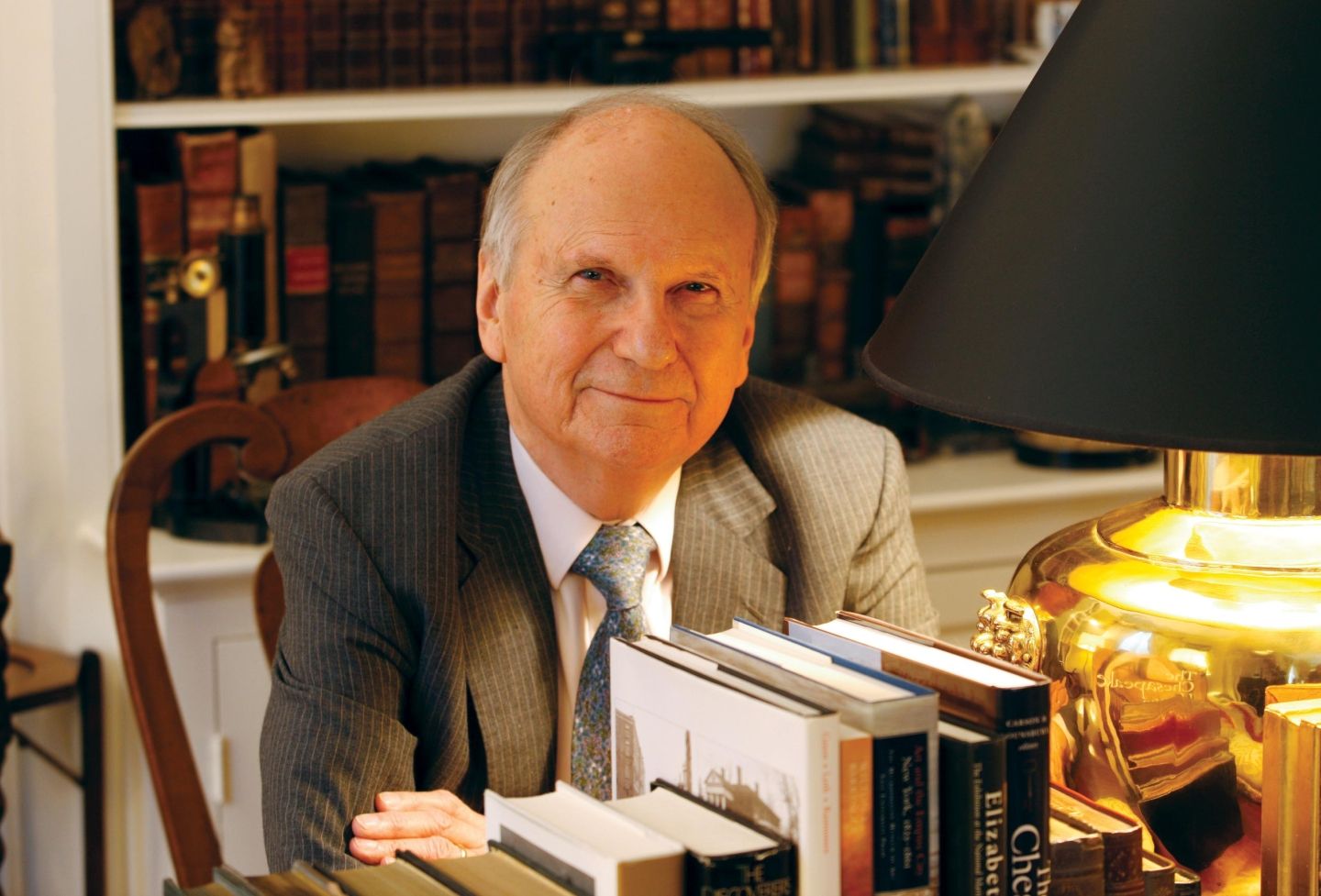

New technology can help improve the notice-and-comment rulemaking process for federal agencies, says University of Virginia School of Law professor Michael Livermore.

Livermore, who teaches environmental law, administrative law, and regulatory law and policy, dissects the issue in a Notre Dame Law Review article co-written by Vladimir Eidelman and Brian Grom of FiscalNote.

Prior to joining UVA, Livermore was the founding executive director of the Institute for Policy Integrity at the New York University School of Law, a think tank dedicated to improving the quality of government decision-making.

A deluge of public comments since the process moved online presents both challenges and opportunities, the authors argue. Livermore recently discussed how advances in natural language processing technology show great promise for both researchers and policymakers.

How does the current notice-and-comment process work?

Under the Administrative Procedure Act, administrative agencies that are engaged in regulation must publicize proposed rules, solicit public comments and give those comments appropriate consideration before issuing a final rule. In the analog days, this was a paper process carried out via official publications and the mail. Today, most of the notice-and-comment process has migrated online, substantially lowering the costs of participation. One consequence of the move online has been an explosion in the number of comments received by agencies. When interest in a proposed regulation is high, it isn’t uncommon for an agency to receive more than a million comments from the public.

Can you explain how you conducted your analysis and what you found?

My co-authors and I are interested in what new “big data” tools like computational text analysis might reveal about public comments and how those tools might be put to use to increase the value of public participation in the regulatory process. To get a sense of whether these tools could extract meaningful information from public comments, we applied a tool called “sentiment analysis” to several million public comments. Sentiment analysis essentially compares the number of “nice” words in a document (such as “useful,” “intelligent” and “generous”) with the number of “bad” words in a document (such as “harmful,” “unwise” and “expensive”) to develop a score for the general emotional valence the author has expressed. Once we had sentiment scores for all of the documents, we compared sentiment to the ideological polarization of agencies, based on values derived from the political science literature. Our takeaway was that the more polarized the agency, the more likely it was to receive comments with negative sentiment. Our study was correlational, rather than causal, but it nonetheless provides an interesting new perspective on how agencies and the public interact.
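To make the word-counting idea concrete, here is a minimal sketch of lexicon-based sentiment scoring. It is not the authors' actual pipeline: the two word lists contain only the example words mentioned above, and the function name `sentiment_score` and its normalization to a value between -1 and 1 are assumptions made for illustration.

```python
import re

# Tiny illustrative lexicons built only from the example words in the interview.
# Real sentiment lexicons contain thousands of entries (assumption: the study
# used a much larger one).
POSITIVE = {"useful", "intelligent", "generous"}
NEGATIVE = {"harmful", "unwise", "expensive"}


def sentiment_score(text: str) -> float:
    """Return (positive - negative) / (positive + negative), a value in [-1, 1].

    Returns 0.0 when the text contains no lexicon words at all.
    """
    words = re.findall(r"[a-z']+", text.lower())
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    total = pos + neg
    return (pos - neg) / total if total else 0.0


# Hypothetical comments, written for this example.
comments = [
    "This rule is useful and the agency's approach is intelligent.",
    "The proposal is harmful, unwise and far too expensive.",
    "A useful idea, but expensive to implement.",
]
scores = [sentiment_score(c) for c in comments]
print(scores)
```

A score near 1 means mostly positive words, a score near -1 mostly negative words, and 0 either a balance or no lexicon words. Averaging such scores by agency would give the kind of per-agency figure that could then be compared with a polarization measure.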

What existing technologies could improve the process?

We refer to one of the challenges that face agencies in the era of mass public commenting as “losing the forest for the trees.” What we mean by this is that it is hard for agency personnel to “zoom out” from individual comments to identify larger patterns in how the public is responding to regulatory proposals. One technology that we explore to address this challenge is “topic modeling,” a relatively new tool in computational text analysis. I’ve used topic models in other work on the relation between the Supreme Court and the federal appellate courts: They are powerful and have potentially wide application in the legal context. The core feature of topic models is their ability to extract very high-level trends in the subject matter of a large collection of documents. For agencies, a topic model can “read” a million comments and provide a sense of what they are saying in the aggregate, something that no individual person could possibly do.
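The interview does not say which topic model the authors used. As one common example, the sketch below implements latent Dirichlet allocation (LDA) with a small collapsed Gibbs sampler in plain Python. The function name `lda_gibbs`, the hyperparameters `alpha` and `beta`, the stopword list and the four sample comments are all assumptions chosen for illustration, and a real analysis would use an established library and far more data.

```python
import random
from collections import Counter


def lda_gibbs(docs, num_topics, iterations=200, alpha=0.1, beta=0.01, seed=0):
    """Fit LDA by collapsed Gibbs sampling.

    docs is a list of token lists. Returns (doc_topic, topic_word):
    per-document topic counts and per-topic word counts.
    """
    rng = random.Random(seed)
    vocab_size = len({w for doc in docs for w in doc})
    doc_topic = [[0] * num_topics for _ in docs]
    topic_word = [Counter() for _ in range(num_topics)]
    topic_total = [0] * num_topics
    assignments = []

    # Start by assigning every token to a random topic.
    for d, doc in enumerate(docs):
        z_doc = []
        for w in doc:
            z = rng.randrange(num_topics)
            z_doc.append(z)
            doc_topic[d][z] += 1
            topic_word[z][w] += 1
            topic_total[z] += 1
        assignments.append(z_doc)

    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                # Remove the token's current assignment from the counts.
                z = assignments[d][i]
                doc_topic[d][z] -= 1
                topic_word[z][w] -= 1
                topic_total[z] -= 1
                # Resample: topics already common in this document and
                # already associated with this word get more weight.
                weights = [
                    (doc_topic[d][k] + alpha)
                    * (topic_word[k][w] + beta)
                    / (topic_total[k] + vocab_size * beta)
                    for k in range(num_topics)
                ]
                z = rng.choices(range(num_topics), weights=weights)[0]
                assignments[d][i] = z
                doc_topic[d][z] += 1
                topic_word[z][w] += 1
                topic_total[z] += 1
    return doc_topic, topic_word


# Hypothetical comments, written for this example.
comments = [
    "emissions limits protect air quality and public health",
    "air quality and health improve when emissions fall",
    "compliance costs burden small business owners",
    "small business owners cannot absorb compliance costs",
]
STOPWORDS = {"and", "when", "cannot"}
docs = [[w for w in c.split() if w not in STOPWORDS] for c in comments]

doc_topic, topic_word = lda_gibbs(docs, num_topics=2)
for k, counts in enumerate(topic_word):
    top_words = [w for w, n in counts.most_common(4) if n > 0]
    print(f"topic {k}: {top_words}")
```

The top words per topic are the "zoomed out" summary: a reader scans a handful of word lists rather than every comment. With only four short comments the result is a toy, and the sampler is random, so the topics found can vary with the seed.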

What emerging technologies might play a role in the process in the future?

If agencies decided to get serious about the use of technology to improve the regulatory process, there is a huge potential space for innovation. Something that I am particularly excited about is the possibility of improving on the one-way flow of information, where the notice-and-comment process is mostly used as a way to allow information to move from the public to agencies. A better model is a multi-direction discourse that allows for many conversations among stakeholders with different perspectives. Creating room for this type of discourse might help to tamp down on extreme and unhelpful polarization over regulatory issues.

Are there any broader lessons learned about how the public interacts with the government during the regulatory process?

An inherent limitation of the public comment process is that it tends to focus on technical details. Typically, the agency has moved on from the kind of high-level question that the interested public is most focused on — say, whether to regulate greenhouse gas emissions. After an agency has answered that high-level question, there are a large number of details to be figured out. But most members of the public aren’t in a position to understand — and often aren’t interested in — those technical details. So as a forum to work through big-picture disputes over basic values or policy directions, the regulatory process has important limitations. Even a perfect system of public participation can’t surmount the basic fact that, most of the time, agencies are focused on technical, expertise-driven decisions.

Founded in 1819, the University of Virginia School of Law is the second-oldest continuously operating law school in the nation. Consistently ranked among the top law schools, Virginia is a world-renowned training ground for distinguished lawyers and public servants, instilling in them a commitment to leadership, integrity and community service.